Lights Out: Coronal Mass Ejections and EMPs

Blasts of electromagnetic energy can disable electronics.

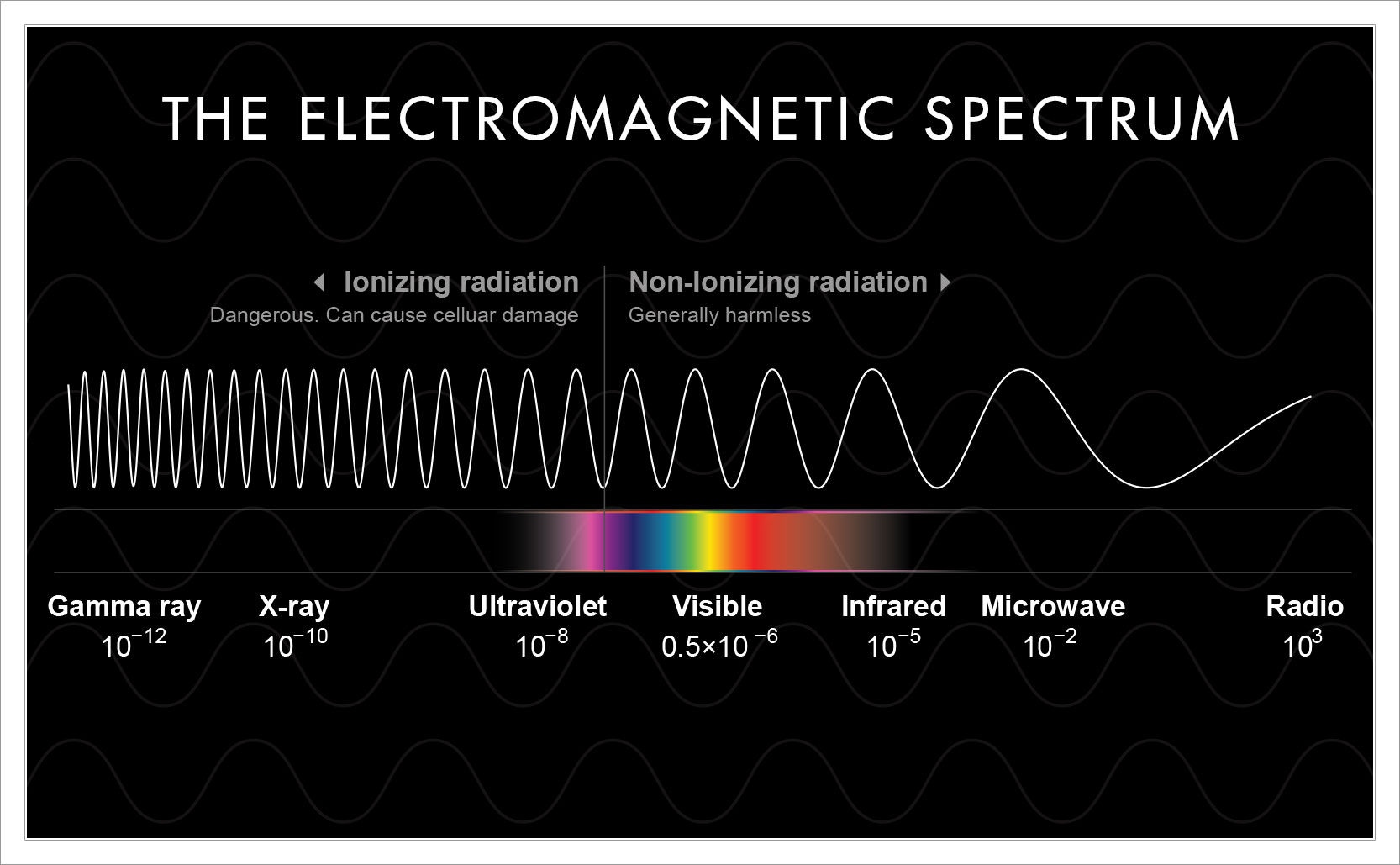

Electricity and magnetism go hand in hand – you can’t have a moving electrical charge without a magnetic field. This also means that a change to one affects the other. For example, run an electrical current near a compass and its magnetic field will move the needle. This also works the other way around: change the magnetic field near an electronic device and it can induce currents that interfere with or damage the device.

Our electrical grids and our electronic devices are subject to interference by magnetic fields. The sun is the most powerful source of magnetic activity in our solar system and, thankfully for our electronics, it’s far away … for the most part.

Solar Flares and Coronal Mass Ejections

Solar flares are bursts of electromagnetic radiation from the sun – all energy, no matter. That radiation travels at the speed of light, so when a flare erupts facing Earth it reaches us in about 8 minutes. Strong flares can also accelerate protons and other charged particles to very high speeds; these arrive shortly after the radiation. Most solar flares are harmless to life on Earth, but more intense flares can disrupt GPS and radio signals and endanger astronauts in space. One benefit of solar flares is that they can serve as early warning signs of potential coronal mass ejections.

Coronal Mass Ejections (CMEs) are also bursts from the sun but, unlike solar flares, they are ejections of actual matter (plasma) as well as radiation. CMEs contain millions to billions of tons of charged particles that are sent from the sun into space. CMEs move at a more leisurely pace than solar flares: one sent towards Earth typically takes a day or more to reach us.
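To see where "about 8 minutes" and "a day or more" come from, here is a rough back-of-the-envelope sketch. It simply divides the average sun-to-Earth distance by a speed. The CME speeds used (500, 1,000, and 2,000 km/s) are illustrative assumptions, not figures from this article, and real CMEs speed up or slow down on the way, so treat the results as ballpark numbers only.

```python
# Rough travel-time estimate: time = distance / speed.
# Assumes a constant speed over the whole trip, which real CMEs do not keep.

AU_KM = 149_597_870.7            # average sun-Earth distance (1 astronomical unit), km
LIGHT_SPEED_KM_S = 299_792.458   # speed of light in a vacuum, km/s


def travel_time_seconds(speed_km_s: float, distance_km: float = AU_KM) -> float:
    """Return the seconds needed to cover distance_km at a constant speed."""
    if speed_km_s <= 0:
        raise ValueError("speed must be positive")
    return distance_km / speed_km_s


# Flare radiation travels at the speed of light.
flare_minutes = travel_time_seconds(LIGHT_SPEED_KM_S) / 60

# Hypothetical CME speeds in km/s, chosen only for illustration.
cme_hours = {speed: travel_time_seconds(speed) / 3600 for speed in (500, 1000, 2000)}

print(f"Flare radiation: about {flare_minutes:.1f} minutes")
for speed, hours in cme_hours.items():
    print(f"CME at {speed} km/s: about {hours:.0f} hours ({hours / 24:.1f} days)")
```

Under these assumptions a slow CME takes several days to arrive, while a very fast one arrives in under a day, which is why a flare spotted in minutes can give useful warning of the CME behind it.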

By hitting Earth with so many charged particles, a CME can push Earth’s magnetosphere to the limit and create a geomagnetic storm. A geomagnetic storm can last for hours, during which time the disturbed magnetic field induces electrical currents in conductors at the surface of the planet. The greatest threat CMEs pose is to very long conductors of electricity such as the power grid. These induced currents can push more electricity through the grid than it was designed for, causing blackouts and potentially damaging equipment connected to it.

Man-made EMPs

The sun isn’t the only potential source of widespread electronic disturbance. Electromagnetic pulse (EMP) weapons are man-made devices that can replicate the effects of solar CMEs, but with more precision, depending on the kinds of electronics the attacker wants to disable.

Nuclear and non-nuclear EMP weapons can emit pulses at different frequencies, targeting anything from large electrical conductors such as the power grid down to small conductors such as cars, phones, and personal electronics. EMP weapons can damage or disable an opponent’s infrastructure without destroying human life or buildings.

So, should I do something?

Should you begin prepping for an end-of-days, stone-age, electronics-free scenario? Probably not. CMEs are natural occurrences that happen every few days, and most of them miss Earth or are too weak to matter. Big CMEs hit Earth about every 25 years. Energy companies know this and take steps to protect the grid from the effects of CMEs and to keep the power on. That said, mega CME storms like the Carrington Event of 1859 are thought to occur only every few centuries, and if one comes along there’s not much you can do about it.

As for EMP weapons, it depends on how likely you think it is that where you live will be attacked. Prepper websites will scare you into thinking you need to safeguard against these potential attacks. If you want to start putting electronics into Faraday bags or lining your home with tinfoil, feel free.

Added info: one benefit of solar flares and CMEs is more intense aurora light shows.