Labyrinths & Mazes

Labyrinths are made for contemplation while mazes are made for confusion.

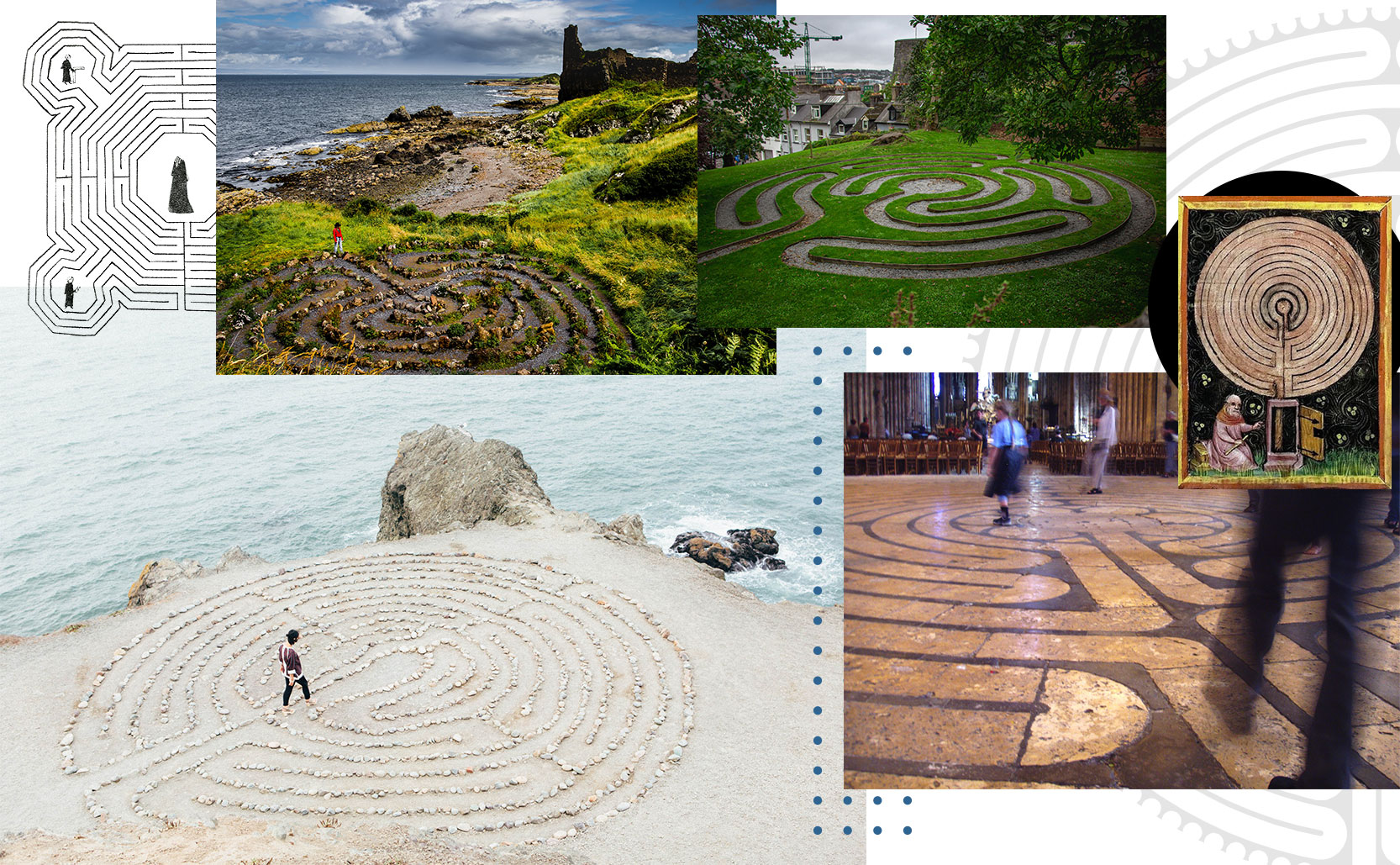

The terms “labyrinth” and “maze” are used fairly interchangeably, but they’re quite different. A labyrinth is a single unicursal path without choices – you keep walking forward and it will lead you out. A maze is the opposite. Mazes are multicursal puzzles filled with choices of where you could go. Mazes are designed to get you lost; labyrinths make it impossible to get lost.

One way out

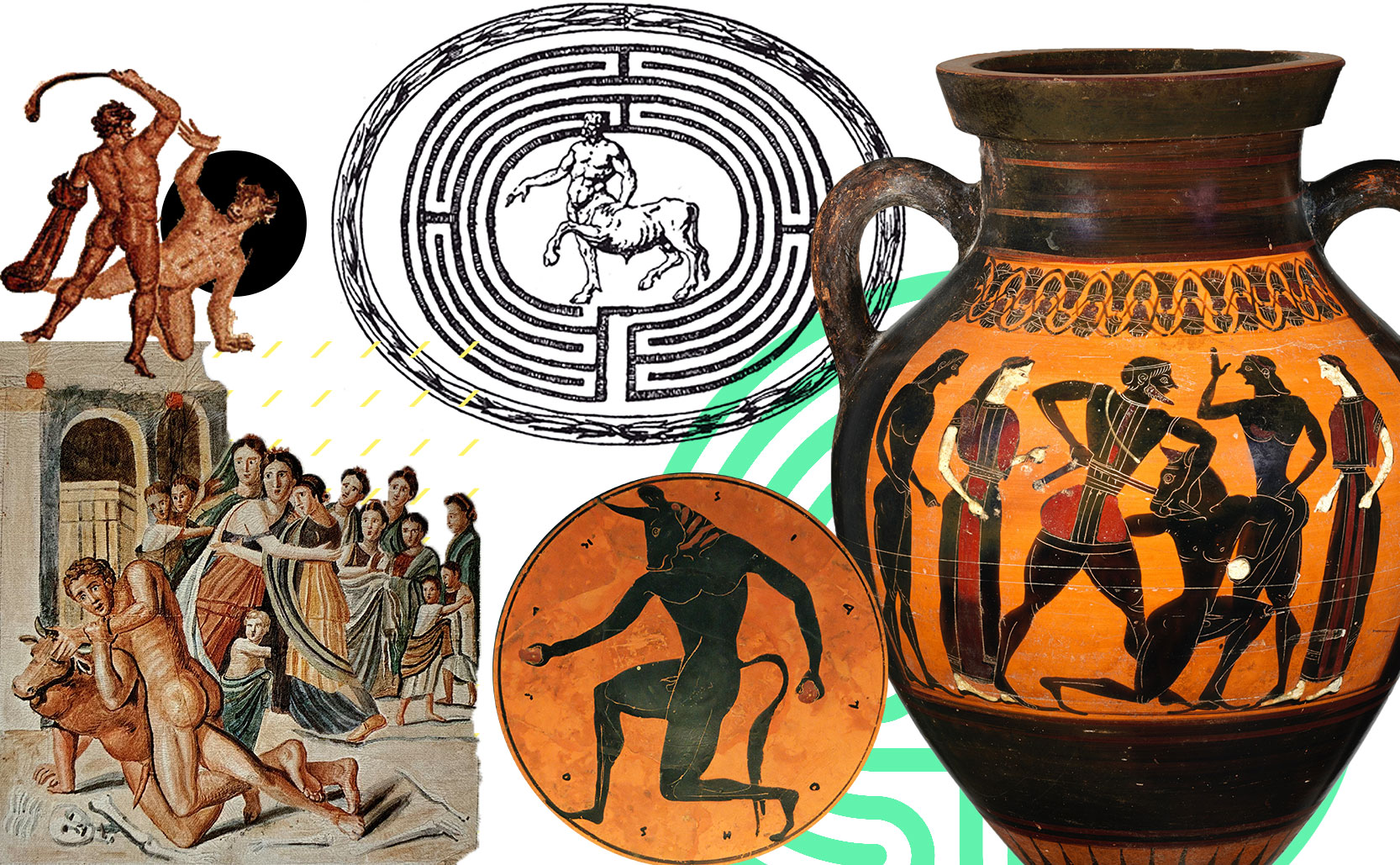

Perhaps the most famous labyrinth is that of the Minotaur in Greek legend. The part-man, part-bull Minotaur was said to live in a labyrinth designed by Daedalus. While the myth says “labyrinth” and contemporary illustrations showed the Minotaur at the center of a labyrinth, it was actually a maze. In the story it was cleverly designed to confuse (and trap) those who entered, which is in line with a maze rather than a labyrinth.

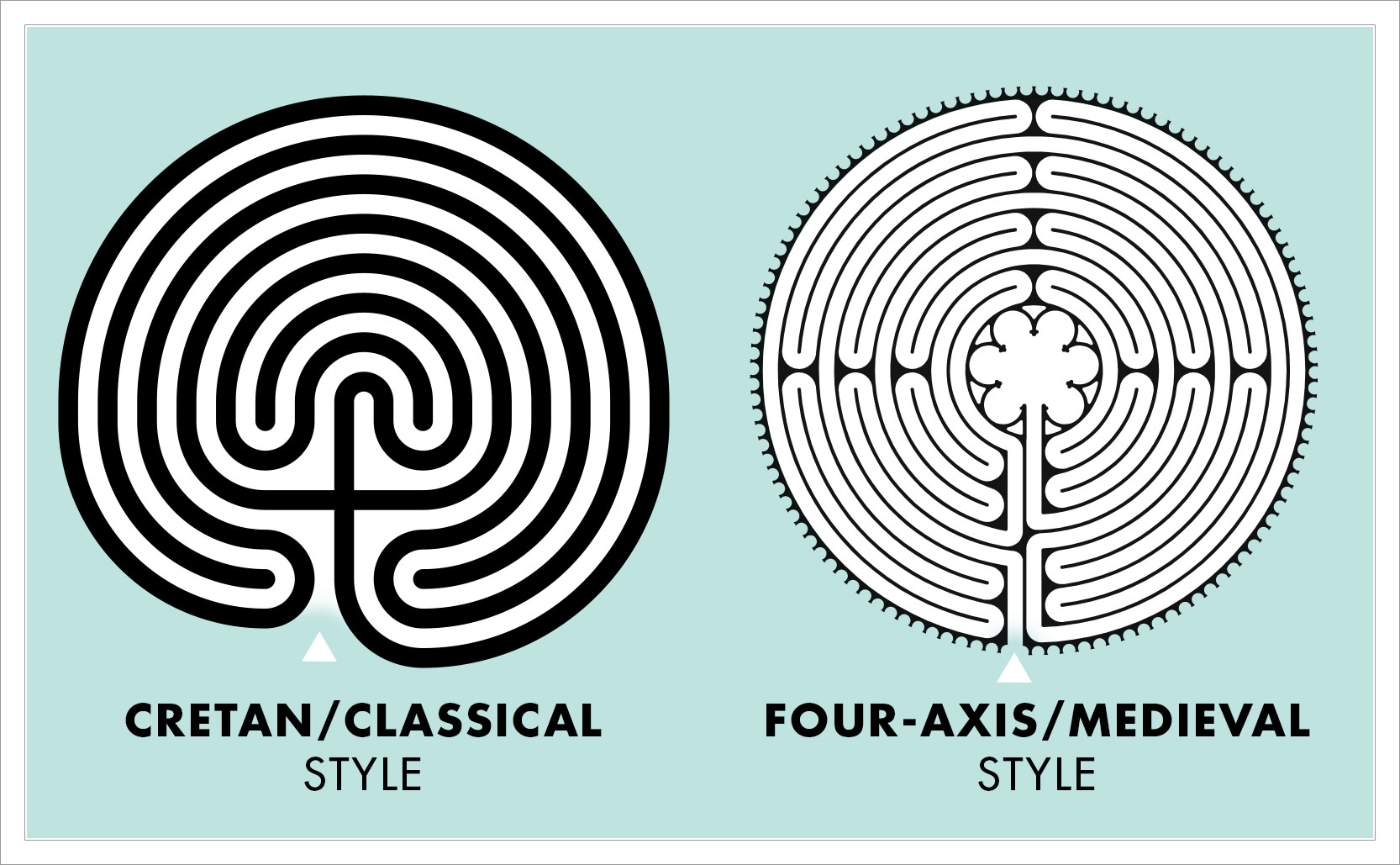

Typical labyrinths wind back and forth from the outside to the center and then back out again, following a single path. There are twists & turns but no choices; you simply keep walking forward. Labyrinths have two popular frameworks: the Cretan/Classical style and the four-axis/Medieval design. The four-axis design was created during the Middle Ages and was popularized in the cathedral floors of northern France. Chartres Cathedral is the most famous example of a four-axis labyrinth, a design which has been copied around the world (Chatham, Massachusetts has an outdoor copy, Grace Cathedral in San Francisco has one, etc.).

Adding to the confusion between labyrinths and mazes are turf mazes … which are actually labyrinths. In Northern Europe and the British Isles, turf mazes are outdoor labyrinths made of short-cropped grass and sometimes stones. Their designs are similar to the ones found in Medieval cathedrals, and they were also made to be walked.

Walk the path

As to the purpose of labyrinths, there isn’t a single definitive answer. Some say they were easier alternatives to making religious pilgrimages to holy sites. Instead of traveling to a distant land you could pray as you walked the path of a labyrinth close to home. Labyrinths in this context were spiritual paths to God. Some labyrinths were built as entertainment for children. Others entertained children by accident: the labyrinth of Reims Cathedral was designed for spiritual reasons, but it was removed in 1779 because the priests felt children were having too much fun on it during church services. Fishermen of Sweden believed that turf mazes could trap evil spirits, freeing the men to have only good luck on their trips out to sea. The late 20th century saw a resurgence in labyrinth popularity, which took on an additional New Age spiritual purpose.

Beyond the spiritual, labyrinths can have physical & psychological benefits. Typically found in quiet semi-secluded settings, labyrinths can help calm the mind through mindful meditation. During the pandemic they were a free outdoor resource for people looking to recenter themselves. Walking a labyrinth can trigger the relaxation response which has the benefits of reducing blood pressure and lowering stress levels.

Land of confusion

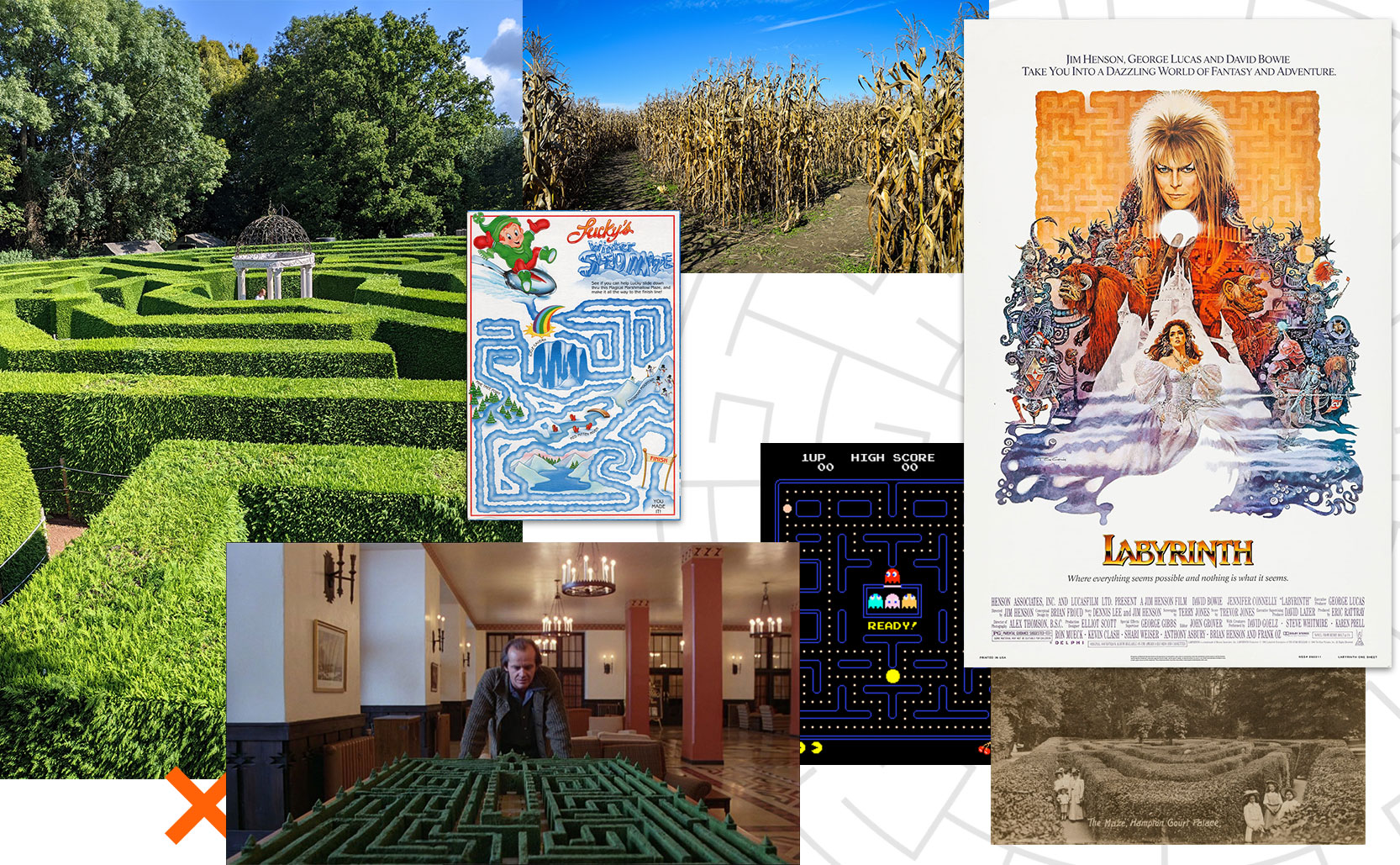

From the calm mindfulness of labyrinths to the chaos of mazes. Mazes are puzzles. Unlike labyrinths, where the correct path is always in front of you, mazes offer many alternate directions. Labyrinths are freedom from choices, whereas mazes are nothing but choices (most of which are wrong).

As evidenced in the story of the Minotaur, mazes have existed for a very long time. As labyrinths grew in popularity in the Middle Ages, so too did mazes. Even the word “maze” dates to the Middle Ages, when it meant “delusion, bewilderment, confusion of thought”.

Hedge mazes were constructed/grown on European palace grounds as a fun novelty of the rich. The oldest surviving hedge maze in England is the six-foot-high Hampton Court Palace maze, planted between 1689 and 1695.

Corn mazes (or “maize mazes” as they are known in Britain, and variations of “maize labyrinths” in most other European languages) started in the early 1990s. The first corn maze was designed by famed maze creator Adrian Fisher and was commissioned by former Disney producer Don Frantz in Annville, Pennsylvania in 1993 (it was “Cornelius, the Cobasaurus”, a 3-acre dinosaur maze). Today farmers use GPS and drones to aid in the creation of corn mazes, which generate considerable income. Treinen Farm in Lodi, Wisconsin, estimates that it brings in 90% of its income from autumnal agrotourism (the corn maze, pumpkin patch, hayrides, etc.).

I was lost but now I am found

The confusion of mazes can be frustrating, but it can also be rewarding. Since the late 19th century mazes have been used in science experiments to study animal psychology and the process of learning and, by extension, how the findings may apply to humans. In 1882 John Lubbock wrote about how various insects could navigate simple mazes. The iconic idea of rats in mazes began with Willard Small who, in 1901, documented his experiments placing rats in mazes and observing their behavior. Small used the Hampton Court Palace maze as the inspiration for his rat maze.

Modern cities are typically laid out on rectangular grid systems, making navigation fairly easy. Older cities are a different story. Older cities have grown more organically and don’t typically follow a structured grid. The Greek town of Mykonos, however, is an example of a town that avoids a grid on purpose: it’s said to have been intentionally laid out to confuse invading pirates. Its builders used the confusion of mazes as a defensive tactic.

Artificial Intelligence also owes a debt to mazes. Bringing the legend of the Minotaur and rats in mazes together, mathematician Claude Shannon created “Theseus”, an electronic mouse designed to solve mazes. In 1950 Shannon constructed a rearrangeable maze wired with circuits. Once placed in the maze, the mouse would advance, encounter obstacles, and then relay the information to the computer. The computer in turn would learn about the maze and then tell Theseus which way to go. Theseus was the first artificial learning device in the world and one of the first experiments in artificial intelligence.
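For the curious, the learn-then-remember idea can be sketched in a few lines of Python. This is only a loose illustration, not a model of Shannon's actual circuitry: the grid `MAZE`, the functions `explore` and `replay`, and the depth-first trial-and-error strategy are all assumptions made up for this example.

```python
# Hypothetical 5x5 maze: '#' is a wall, 'S' the start, 'G' the goal.
MAZE = [
    "S.#..",
    ".##.#",
    "...#.",
    "#....",
    "..#.G",
]

# Directions are tried in this fixed order at every cell.
MOVES = {"N": (-1, 0), "E": (0, 1), "S": (1, 0), "W": (0, -1)}


def find(maze, ch):
    """Return the (row, col) of the first cell containing ch."""
    for r, row in enumerate(maze):
        c = row.find(ch)
        if c != -1:
            return (r, c)
    raise ValueError(f"{ch!r} not found in maze")


def is_open(maze, cell):
    """True if cell is inside the grid and not a wall."""
    r, c = cell
    return 0 <= r < len(maze) and 0 <= c < len(maze[0]) and maze[r][c] != "#"


def explore(maze):
    """First run: trial and error with backtracking.

    Returns (memory, steps), where memory maps each cell on the
    successful route to the direction the mouse left it by.
    """
    start, goal = find(maze, "S"), find(maze, "G")
    memory = {}
    visited = {start}
    path = [start]  # current trail, used to back out of dead ends
    steps = 0
    while path[-1] != goal:
        cell = path[-1]
        for name, (dr, dc) in MOVES.items():
            nxt = (cell[0] + dr, cell[1] + dc)
            if is_open(maze, nxt) and nxt not in visited:
                memory[cell] = name  # remember how we left this cell
                visited.add(nxt)
                path.append(nxt)
                steps += 1
                break
        else:
            # Dead end: forget this cell and step back one cell.
            memory.pop(cell, None)
            path.pop()
            steps += 1
            if not path:
                raise ValueError("maze has no solution")
    return memory, steps


def replay(maze, memory):
    """Second run: follow the remembered directions straight to the goal."""
    cell, goal = find(maze, "S"), find(maze, "G")
    route = [cell]
    while cell != goal:
        dr, dc = MOVES[memory[cell]]
        cell = (cell[0] + dr, cell[1] + dc)
        route.append(cell)
    return route


memory, steps = explore(MAZE)
route = replay(MAZE, memory)
print(f"first run: {steps} steps, second run: {len(route) - 1} steps")
```

On the first run the mouse wanders into dead ends and has to back out of them. On the second run it follows its memory and walks straight to the goal, which is the behavior described above.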

On and on

The enduring appeal of labyrinths and mazes is their mystery. The mystery of the self and the mystery of possibility. A maze is a puzzle to solve; in a labyrinth the puzzle to solve is yourself.

Added info: the etymology of the word “clue” is tied (as it were) to the story of the Minotaur. A “clew” was a ball of thread, like the one Ariadne gave to Theseus to help him find his way in the labyrinth of the Minotaur. Over time the spelling and meaning changed to the “clue” we use today, like the clue Ariadne gave to Theseus.